*** This article was originally published in September 2018, and was updated and republished in June 2021

Outcome statements are change statements. They are critical to ensure you are collecting data that will inform program improvement efforts. They address the key question:

The statements provide the foundation from which all data collection questions will stem. Too often, organizations jump into creating surveys without outcome statements. The result can be asking questions that have nothing to do with understanding program impact or measuring progress. Like throwing darts at a board, hoping something will stick.

The process of creating measurable outcomes requires time, planning and collaboration. Outcome statements provide direction, so when you’re ready to collect data, what you ask is aligned with them. You’ll throw darts, and hit a bullseye.

| What Does the Program Do? | What Change is Expected as a Result? | |
|---|---|---|
| Program Activity | Outcome | Outcome |
| Provide math and science classroom activities for at risk students | Students will improve their attitude toward math and science | Students will increase their interest in math and science |
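If you keep your draft survey in a spreadsheet or script, the alignment check can be sketched in a few lines. This is only an illustration: the two outcomes come from the table above, while the draft survey questions, the short keys (`attitude`, `interest`), and the function names are hypothetical and invented for this example.

```python
# Outcome statements from the table above, keyed by a short (hypothetical) label.
outcomes = {
    "attitude": "Students will improve their attitude toward math and science",
    "interest": "Students will increase their interest in math and science",
}

# Hypothetical draft survey questions, each tagged with the outcome it is
# meant to measure (None means no outcome was identified for it).
draft_questions = [
    ("How do you feel about math and science now compared with before the program?", "attitude"),
    ("How interested are you in learning more math and science?", "interest"),
    ("How did you hear about our organization?", None),
]

def unaligned_questions(questions, outcomes):
    """Return question text that is not tied to any outcome statement."""
    return [text for text, key in questions if key not in outcomes]

def unmeasured_outcomes(questions, outcomes):
    """Return outcome labels that no draft question measures yet."""
    used = {key for _, key in questions}
    return [key for key in outcomes if key not in used]

print(unaligned_questions(draft_questions, outcomes))
print(unmeasured_outcomes(draft_questions, outcomes))
```

A question that shows up in the first list is a dart thrown without a target. An outcome that shows up in the second list is a target nobody is aiming at yet.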

At a bare minimum, development and program staff should come together to create these statements. Ideally, others are at the table depending upon the size of your organization. Development staff members can use outcome statements in their grant proposals and other fundraising activities as applicable. Program staff typically are the ones to collect the data. The result – development staff have the data they need to report to funders, and program staff have data they need to understand program successes and challenges. Bullseye!

This brief mini guide series goes into more detail on several evaluation subjects. The first one highlights how to create logic models and measurable outcomes.

Do you believe this blog post will help you?

That, my friends, is a leading question. If I, as the survey writer, put what I think into the question, I am on a subconscious (or maybe even conscious) level making it more likely that the survey taker will respond the way I want them to. In this case, it will lead them to answer ‘yes’.

Are you falling into the same trap with your questions? Take a minute and dig out a survey you’re doing now or have done in the past. Review it and look for any questions that start with ‘do you believe’ or ‘do you think’, because they might be leading questions and might be skewing your survey responses. A big indicator that you might have a leading question in your survey is if your response choices are ‘yes’ and ‘no’.
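If your survey questions live in a spreadsheet or export file, a quick first pass for these two warning signs can be sketched in code. This is a rough sketch, not a substitute for a careful read: the `survey` data, the function name, and the three-point response labels are all made up for illustration.

```python
# Openers that often signal a leading question (taken from the tips above).
LEADING_OPENERS = ("do you believe", "do you think")

def flag_leading_questions(questions):
    """Return the text of questions that deserve a second look."""
    flagged = []
    for question in questions:
        text = question["text"].strip().lower()
        choices = {c.strip().lower() for c in question.get("choices", [])}
        starts_leading = text.startswith(LEADING_OPENERS)
        yes_no_only = choices == {"yes", "no"}
        if starts_leading or yes_no_only:
            flagged.append(question["text"])
    return flagged

# Hypothetical survey: the scale labels below are illustrative only.
survey = [
    {"text": "Do you believe this blog post will help you?",
     "choices": ["Yes", "No"]},
    {"text": "What do you think about this blog post?",
     "choices": ["Not helpful", "Somewhat helpful", "Very helpful"]},
]

print(flag_leading_questions(survey))
```

A flagged question is not automatically a bad one. Treat the list as a set of candidates to reread with fresh eyes.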

If you do this, don’t worry, you’re not alone. This is the most common mistake in survey design that I see. I have had the privilege of coaching a number of organizations on survey design, and one of the things I do is an in-depth survey review to uncover and avoid mistakes like these. And this particular mistake comes up a lot.

The good news is that there’s an easy fix to leading questions like this. Just remove it and replace it with a scale. Like this:

What do you think about this blog post?

Another option is to simply offer an open-ended question and remove the response options entirely. (Make sure to leave plenty of room for a full answer):

Do you see the difference between the earlier question and the rewrites? Seemingly small tweaks like this can help you make sure your survey is getting helpful, actionable data that you can use.

Avoid the common leading question mistake by re-wording your survey question to be objective, and offer a range of responses on a scale. (I often use three-point to five-point scales.)

Want more survey tips and tricks? My new book, “Nonprofit Program Evaluation Made Simple”, has an entire chapter dedicated to basic survey design. Every book comes with free access to a companion website that is full of downloadable templates, including a survey design template! Get your copy now on Amazon or at Barnes & Noble.

Want a survey review? Reach out, I’d be happy to help.

Want hands-on learning? Register for my virtual Survey Design Made Simple workshop on Thursday, May 13.

** Available for purchase as of January 2021! Purchase now on Amazon or at Barnes & Noble **

In the blur of workshops on program evaluation I’ve taught over the years, there’s one persistent question to which I’ve never had a satisfactory answer: “Chari, have you written a book about this?”

While flattered, I never really took this question seriously. I assumed it was just a kind way for clients and colleagues to encourage me and thank me for my help. My consistent answer was no, no book.

But then, not long ago, I taught a session at the Oregon Program Evaluation Network (OPEN) annual conference. My topic was on how to introduce and integrate program evaluation into a nonprofit’s ongoing work. The conversation was lively, and the questions kept coming. And of course, one of those questions was That Question: did I have a book?

For some reason, instead of shrugging this question off yet again, it struck me differently. Why NOT write a book?!

Sure, I had no idea what writing a book would entail. How to go about publishing it. The logistics of it. Not to mention the mental gymnastics I’d need to do to try and sum up and organize my experiences and learnings gathered over my career of twenty-plus years in the nonprofit program evaluation field. But the more I thought about it, the more the idea appealed to me.

Feeling inspired, I hunkered down and put together my first draft, thinking about what this book could be. I decided to focus on the core of all program evaluation work: how to write an evaluation plan. Painstakingly, I outlined my tried-and-true method of getting from concept to a full-blown plan that an organization can then take and run with.

After a year, I had something I was ready to share and quickly found a publisher. I was equally proud, excited, and nervous.

The good news? They liked it! The scary news? They wanted MORE.

They wanted me to expand the book to include creating the evaluation plan and how to go about implementing it.

For the next six months, I dug deep. The book grew to address all program evaluation steps: creating an evaluation plan, determining the best methodologies to collect data, best practices for creating surveys, guides for managing program data, writing and designing compelling reports, and how to use data to improve programs over time.

Those six months were tough. I admit feeling a bit chained to my computer while I wordsmithed each paragraph and wrote and rewrote whole chapters.

The whole time I wrote, I thought back to the faces of those people who came up to me after trainings over the years, asking if I had a book. I kept my eye on the prize: creating a step-by-step guide for how to do program evaluation for the non-evaluator.

Fast forward to October, when an unexpected turn took the book in a new direction. CharityChannel, which publishes books for nonprofit professionals under imprints such as CharityChannel Press, approached my publisher and asked to take my book under the wing of its new Author Brick Road project. According to CharityChannel CEO Stephen Nill, “Author Brick Road leverages its deep publishing experience to empower visionary and transformative leaders to write and publish books of extraordinary impact, clarity, and usefulness.” It was a good move for everyone, so I made the switch.

I’m proud to say that my book is finally DONE. I’m not going to pretend that it was an easy journey. That it hasn’t challenged me — in both fun and terrible ways. Writing a book is as hard and frustrating as everyone says it is. But it is also truly a joyful, exhilarating, and incredible experience.

I love that my program evaluation work helps take an organization from confused, overwhelmed, and resistant… to recognizing the value in the process and confidently going forward with a plan to collect and share data.

My sincere hope is that my book will help even more organizations do just that. That it will serve as a guide to help nonprofit professionals dip their toe into the incredible world of program evaluation and teach them that not only can they DO nonprofit evaluation, but that they SHOULD. And not just because some funder tells you to… but because at the end of the day, nonprofit professionals want to KNOW that their programs positively impact the communities they love and serve.

*** UPDATE AS OF JANUARY 20, 2021:

I’m thrilled to announce that Nonprofit Program Evaluation Made Simple: Get your data. Show your impact. Improve your programs is now available for purchase. (Bonus: every book purchase includes access to a book companion website, which has samples and templates you can download and use right away!) Visit my book page now for more information, or click below to buy now.

In4All was able to adapt quickly when COVID-19 ripped through Portland, Oregon in March 2020. They closed their offices March 13, and were determined not to let this disruption stop their important work to bring educators and businesses together to impact students who are historically under-served at critical points in their K-12 education.

Program Adjustment: Their STEM Connect™ program brings business professionals into 4th and 5th grade classrooms. When COVID transitioned all schools to an online learning environment, their STEM Connect™ program did the same.

There was one major change they needed to make. For in-person classes, the business volunteers would bring materials to sessions for students to work with in groups. To adjust to an online learning environment, volunteers switched to individual kits that allow students to do the activities while physically distanced or even at home. That way, students would still get the value of doing the STEM activity, with instruction from the STEM business volunteer via the school’s online platform.

Evaluation Adjustment: The evaluation process, happily, did not require major adjustments in order to continue as planned. Teachers and STEM business volunteers still were asked to complete mid-year and summative surveys to understand challenges, successes, and opportunities. The one change, however, was the addition of the following question:

“When you think about our partnership next year, what excites you? Brings fear?”

This cleverly worded question was able to indirectly gauge how respondents were feeling about COVID-19 without calling attention directly to the issue. The question allowed In4All staff to better understand how teachers and volunteers were feeling about what next year might look like.

Outcomes: “The findings weren’t surprising,” said Elaine Charpentier Philippi, In4All’s Executive Director. “It showed concern about community spread, and in-person engagement. It helped us make a decision based on findings to target virtual experiences for all students next year.”

Using these mid-year surveys and responses as a basis, the In4All program impact manager, in partnership with the ad-hoc program committee and In4All staff, re-wrote the curriculum to adapt it for online delivery.

By using input from teachers, volunteers and students, In4All remains responsive to what everyone needs to be successful. Their program planning is based on data, helping them to quickly adapt what they’re doing and how they’re doing it to meet everyone’s needs.

Congratulations to the determined and innovative In4All team for finding ways to adapt and continue your important work during such a challenging time – it’s been an honor to work alongside you and to help you use data to make effective program planning decisions!

Learn more about In4All and their programs by visiting in4all.org.

Want to learn how YOU can use data to make program planning decisions during COVID-19? Contact me and I’d love to help!

Since the global coronavirus pandemic turned the world upside down, I can’t help but wonder about how all of this will impact current and future program evaluation work.

Here are just a few of the questions I have heard from my clients, colleagues, nonprofits, foundations, and the evaluation sector at large:

While I certainly don’t have all the answers to these questions, here’s what I do know:

My current work with Metro, the regional government agency for the greater Portland, Oregon area, is an example of how to move forward with program evaluation during these unusual times. The project is to evaluate Metro’s Investment and Innovation Program. This three-year pilot grant program is designed to reduce waste in the Portland Metro Area through nonprofit and business grants.

Instead of letting the pandemic halt progress, we have quickly adapted how we work together.

Instead of in-person working sessions and my trusty box of meeting facilitation tools (my go-tos include flip charts, markers, and hands-on mini-activities!), we quickly shifted to online meetings and email.

Here are just a few things we’ve found that work well:

- All our meetings have strong, clear agendas.
- We use video calls to maintain a more personal working environment.
- We are meeting more frequently now than before, which helps us all stay on the same page.
- We stick to our meeting start and end times, which means we stay on task, productive, and focused.
- We include some “check in” time at the beginning of each meeting so we can share any personal updates and stay connected on a social level.
- When looking at materials, one person shares their screen so we’re all looking at the same thing at the same time (instead of each of us opening our own document on our computers).
- There’s always a designated note taker, who captures ideas in real-time, so the meeting feels dynamic.

While of course I miss being able to connect and collaborate in person, the team is embracing the changes and keeping the work moving forward.

Because we all have a shared willingness to embrace a new virtual style of working together, I’m confident we’ll all find answers to all of the functional questions we have about our evaluation plan in the coming weeks.

There is no “one size fits all” answer to how best to continue doing evaluation work in these uncertain times – but it’s clear that without creativity, flexibility, and courage, discovering solutions for these difficult questions would be impossible.

Here’s the big takeaway: while the pandemic has changed how we do program evaluation, it doesn’t change the fact that program evaluation is still important for organizations to make informed decisions about current and future programs.

Organizations owe it not only to current program participants (or grantees, in Metro’s case!), but also to future program participants to always remain dedicated and focused on continual program improvements.

Before I go, remember these 3 things:

A special thank you to the Metro Investment and Innovation team, Suzanne Piluso and Laura van der Veer, for your commitment to measuring program impact. Your dedication to collecting and using data to understand your program’s successes, challenges, and opportunities is powerful. I’m proud to work alongside you to learn all we can about how the program has been working and to give you the tools to keep growing and improving!

To all those organizations and my fellow evaluators who remain committed to data-driven decision making through these trying times… keep up the great work! We’ve got this!

Resources I’ve found especially helpful lately:

(Original blog post 2020, updated October 2025)

I struggle with the intersection of fundraising and program evaluation. The primary reason to do program evaluation is to learn, for continuous program improvement. It’s not a fundraising activity.

However, I’m often brought into an organization by a grant writer who wants my help to produce data that demonstrates the impact of their program. They usually want to create this specifically to present to a funder when applying for financial support.

Generating these kinds of reports is possible, but before we can get to work, the first thing I have to do is set the context for the project.

Lesson #1 is this: program evaluation is not a public relations, marketing, or fundraising activity… it is first and foremost a learning opportunity. While program evaluation results may help secure funds, market programs, market operations, and overall boost communication efforts, it all starts with an interest in learning: what is going well? What needs improvement? What overall impact are we making?

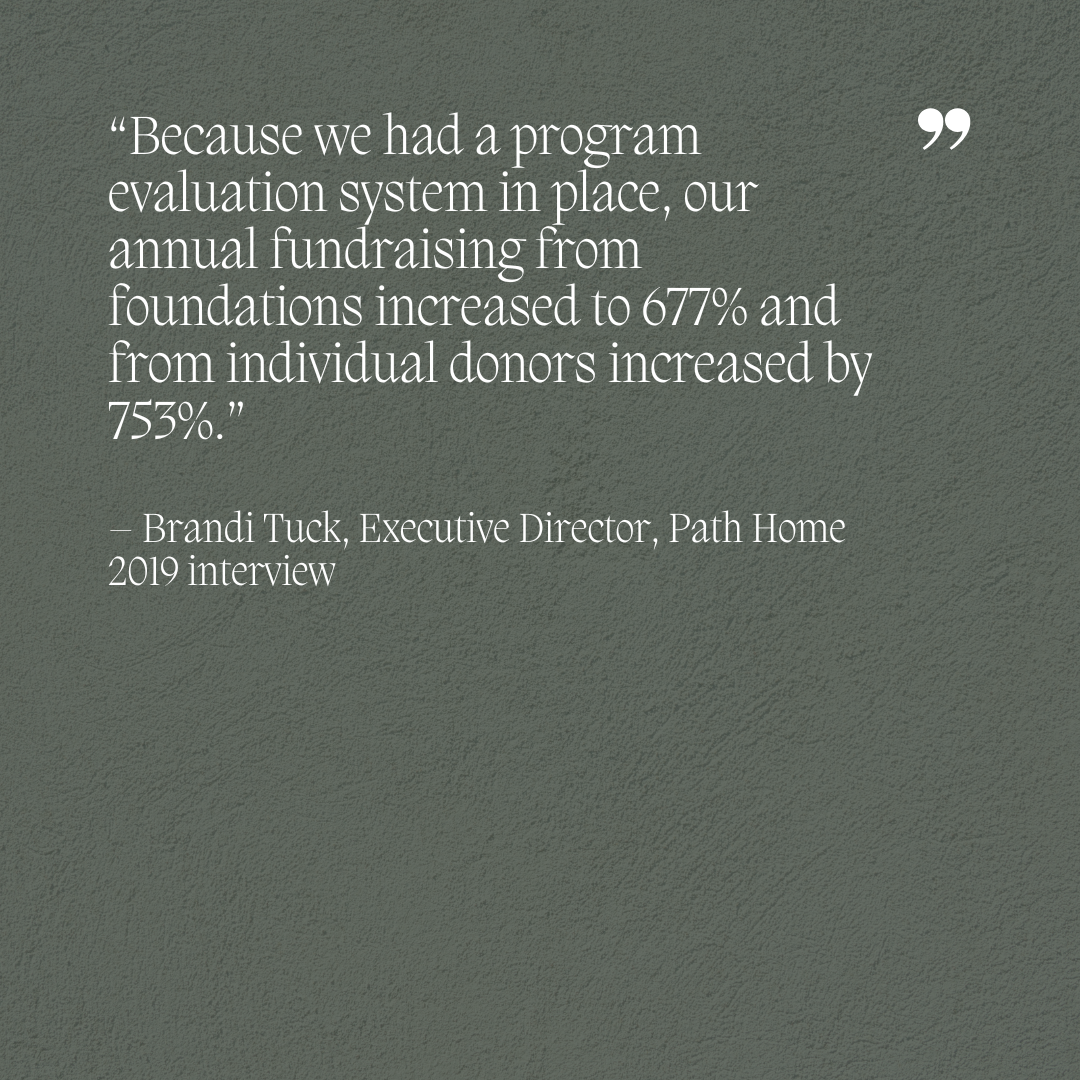

As a part of my book writing process on nonprofit program evaluation, I interviewed past clients for inclusion of their stories in the book. In 2019, I asked Brandi Tuck, the Executive Director of Path Home (formerly Portland Homeless Family Solutions), about how program evaluation has (or has not) had an impact on their fundraising efforts or revenue streams.

What she said knocked me off my seat:

“Because we had a program evaluation system in place, our annual fundraising from foundations increased to 677% and annual fundraising from individual donors increased by 753% (from previous levels).”

I was AMAZED – I honestly did not quite realize the incredible power that data has to turn into dollars.

How did this happen? Here’s what Brandi and I worked on together: we developed a process and outcome evaluation system for their Shelter program to learn what was going well and where improvements were needed. We also sought to generally understand the impact the program was making. The data that came out of this work demonstrated clearly both the impact and areas for ongoing program improvement.

By having these data easily accessible and available, the program staff were then able to not only improve the programs, but also to share with funders (and potential funders) the ongoing work the team was doing for the Shelter program.

This mini-case study shows that the key intersection of program evaluation and fundraising is usage. Along the program evaluation path, you may learn what works well and where you need to fine-tune your process. When program evaluation and fundraising cross paths, it’s an opportunity for you to demonstrate the measurable impact your program is making while also providing current and future funders the chance to turn those data into dollars.

Interested in learning more about how you can explore program evaluation at your organization? Feel free to email me anytime – I’d love to help!

It isn’t a secret that program evaluation gets a bad rap.

In my day-to-day work, it’s common for program staff I come across to involuntarily roll their eyes or tense up just by hearing the term “program evaluation”. And honestly? After seeing staff in many organizations struggle to comply with harsh mandates from internal and external stakeholders to collect program data that may or may not have meaning to the program staff collecting them… I am not surprised by this reaction.

As someone deep in this field of work, I’ve always felt a mix of empathy and frustration at this experience. How can we ease this tension, this uneasiness, this love/hate relationship with this important work?

I was inspired to think about this topic thanks to a wonderful talk I attended by program evaluation pioneer Dr. Michael Quinn Patton. He shared an informal study he completed on how people felt about program evaluation. The study asked participants to choose a photo that most closely matched their feelings toward program evaluation. Unsurprisingly (and unfortunately) the picture most people picked was of one person carrying an unbearable and unwieldy load of papers up a steep hill.

Dr. Patton put in plain terms what most of us in this field already know: most people still see program evaluation as an uphill battle. A burden. A hassle.

This got me thinking about how we can reverse this trend.

What if it’s not program evaluation that’s the problem – what if it’s just our perception of it?

Perhaps that explains why, while we are seeing more and more position descriptions that describe (or directly state) that a key component of the work will be program evaluation, we are at the same time seeing fewer titles that incorporate the term “program evaluation”. Some common examples I’ve seen include…

What does this mean? Why does there seem to be a love/hate relationship with this important work?

I think that no matter what it’s called, the value of evaluation is undisputed. People understand that this important work is a powerful opportunity for learning and organizational growth. But unfortunately, because of a bad rap, use of the term “program evaluation” is falling out of style simply because of the poor brand image associated with it.

In the end, do I think program evaluation needs to go through a re-brand? My answer is a resounding NO. The history, brand image, and long-term value go too deep.

I think a better approach is to work to acknowledge, celebrate, and recognize the critical role program evaluation plays in knowledge acquisition, organizational learning, program improvement, and measuring impact.

Whatever we decide to call it, the connections between program evaluation and the benefits from integrating it into organizational operations are crucial to the ongoing growth and success of programs.

The field has evolved and will continue to evolve. As it grows, new understanding and ways of putting the program evaluation principles into context will emerge that resonate differently with each organization that engages with it.

Whether you call it organizational learning, impact, knowledge management, or anything else, it still falls under the same umbrella of program evaluation. We aren’t going to successfully change program evaluation as a brand, but what we CAN do is try to understand the link between these new position titles and program evaluation as a whole.


Data visualization is based on the simple premise that we learn with our eyes.

In a fast-moving world, you have a precious few seconds to really catch someone’s attention. I am not a graphic designer, and I know that many talented designers exist who specialize in capturing the viewer’s attention right away.

Despite this, I can still leverage data visualization in all written communications, from evaluation plans to final reports. I am an evaluator AND I am an art director. And you can be too.

An art director understands the overall direction the graphic should take. In my evaluation work, that means my job is to create the content, sketch out graphic ideas, and then pass them along to a skilled graphic designer who can make my vision a reality. Ultimately, the goal is to create a visually pleasing way to logically and simply communicate the program model and outcomes we are working towards.

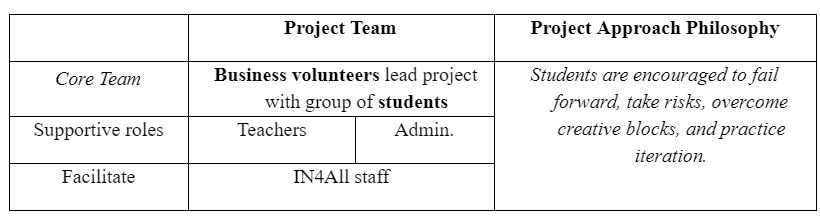

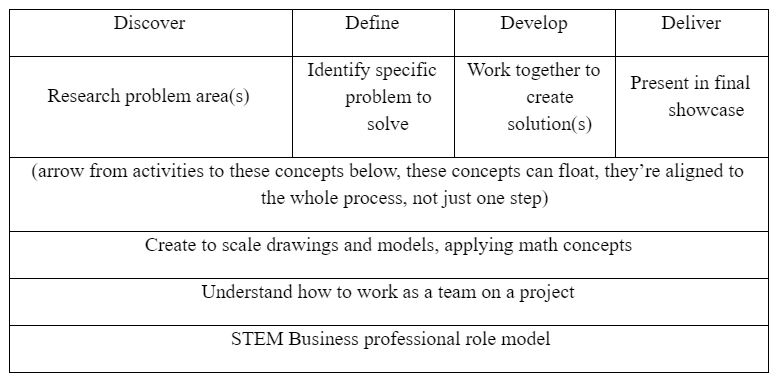

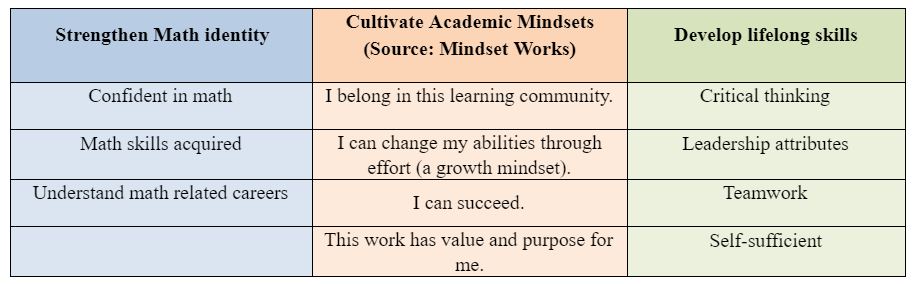

Let’s take my project with In4All’s middle school impact model as an example.

Step 1: First, I needed to collaborate with the In4All staff to identify the key concepts we wanted to include in the model: namely, defining what the program does and what change is expected as a result.

Step 2: The next step was for me to work with our graphic designer, Robyn Barbon from Folklore Media. I organized the findings from my meetings with In4All staff into the following design concept notes and presented them to her in a working session. I also sent her the organization’s logo, brand colors, and other brand style guidelines.

In4All Impact Model: Design Concept

Step 3, Final Product: Based on the design concept and our meeting, Robyn created the final product below. This model is the cornerstone for their program evaluation process.

Takeaways: The In4All impact model leverages visualization to engage readers with the content. Much more interesting than just tables, right?

You’re welcome to learn graphic skills yourself. But don’t let the lack of graphic design expertise hold you back. Pair up with a graphic designer who can help you bring your ideas to fruition.

Interested in learning more about how you can channel your inner art director? Feel free to email me anytime – I’d love to help!

What does your program do? Answering this seemingly simple question is where the program evaluation planning process always starts.

To measure something, you first have to define it. When you apply an evaluative lens to a program, a new way of thinking about it emerges.

Take for example the work I recently completed with the Northwest Housing Alternatives (NHA) Resident Services Program.

The project goal was simple: to improve how the team communicated with potential funders about the positive impact of their work in order to increase their chances for grant funding.

At the end of our 6-month project together, not only did the final concept paper lead to the NHA Resident Services Program securing two of their largest grants to date, but the process helped their staff develop the confidence and skills to gather data that’s relevant to their work AND to put that data to use to continually improve their programs.

To begin my work with them, I started with the NHA Resident Services program description: “Resident services provides ongoing coordination across 32 properties to promote housing stability.”

My evaluative brain was churning: How do these services promote housing stability? What are these services? How frequently do they occur? How does the team decide what activities to implement?

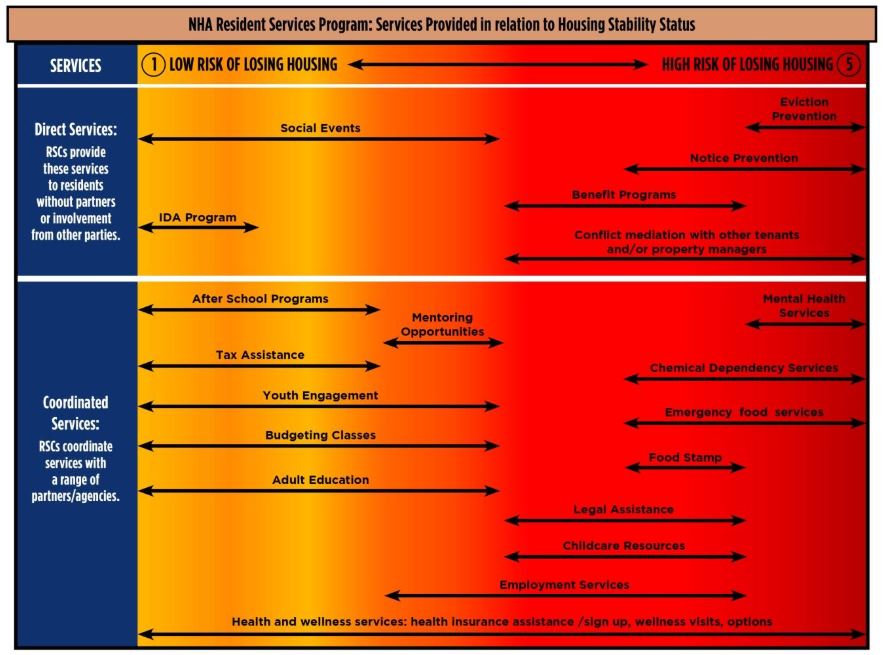

Phase 1 was a personal fact-finding mission. My goal was to look more deeply at what their activities actually were. I completed a document analysis and had many informational interviews and discussions with their staff. From this work, I learned the following:

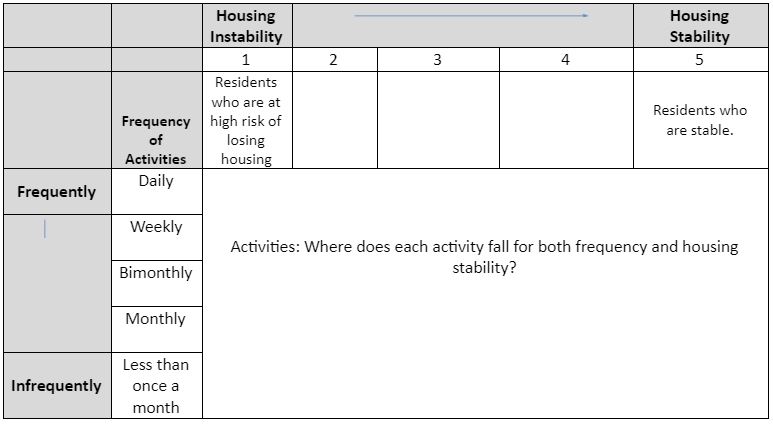

Next was Phase 2, which focused on group work. We all gathered for an evaluation planning session to dive deeper to answer the big question: what does this program do?

First, in small groups, the staff discussed the frequency of their activities as well as how each activity promoted housing stability. Each small group received a stack of 32 cards, each with one activity listed on it. The following table was put on a large wall, so each group could tape up each of the program’s 32 activities where it fell in terms of frequency and the type of residents who received each service:

The figure below depicts the relationship between housing stability and the service activities that we identified during our work together.

Ultimately, what was most significant for the NHA Resident Services Program team’s experience going through the evaluation process was how they saw their work in a new way. The process helped them try new ways to answer the big questions about what their program does and why. It helped them uncover ways to improve and streamline their work to strengthen their program’s impact. Finally, it gave them a new way to more clearly and succinctly communicate how they approach their work to outside funders.

Click the image below to see the final concept paper:

Interested in learning more about how you and your team can benefit from program evaluation at your organization? Want to improve how you communicate the impact of your work to potential funders? Feel free to email me anytime – I’d love to help!

When some people hear the term logic model, a nervous quiver runs through their spine: flashbacks to a painful and frustrating logic model development experience.

Unfortunately, experiences like this have given this term a bad rap, so it’s OK to call it something else: program impact model, evaluation map, program planning and evaluation map, and so on.

Whatever you call it, the logic model tool is an important way to visually capture what your program does and the expected difference it will make.

Why? Because it provides insight about what methodology and measurements to use in evaluating your program. You’ll typically use logic models as a framework in discussing program evaluation with staff—it literally gets everyone on the same page.

Think of your logic model as the wind that powers the sails of your ship—it will determine where your program goes and the outcomes it will have.

Essential questions to consider when beginning the logic model development process:

Chari accurately captured the fundamental goals and mission of our organization and transformed our input into a clear evaluation process that helps us assess the impact of our programs on the lives of the families that we serve. Now we have an amazing way to measure the physical, emotional, and mental effects of our programs and to guide change, ensuring that we are delivering services in the most effective way possible.

Brandi Tuck, Executive Director, Path Home
